The Most Dangerous Document in Your Organization

There’s a dangerous document sitting in nearly every organization right now titled something like "AI Acceptable Use Policy." Legal reviewed it. Compliance signed off. The board got a summary. Everyone moved on convinced that AI governance was handled.

It’s not.

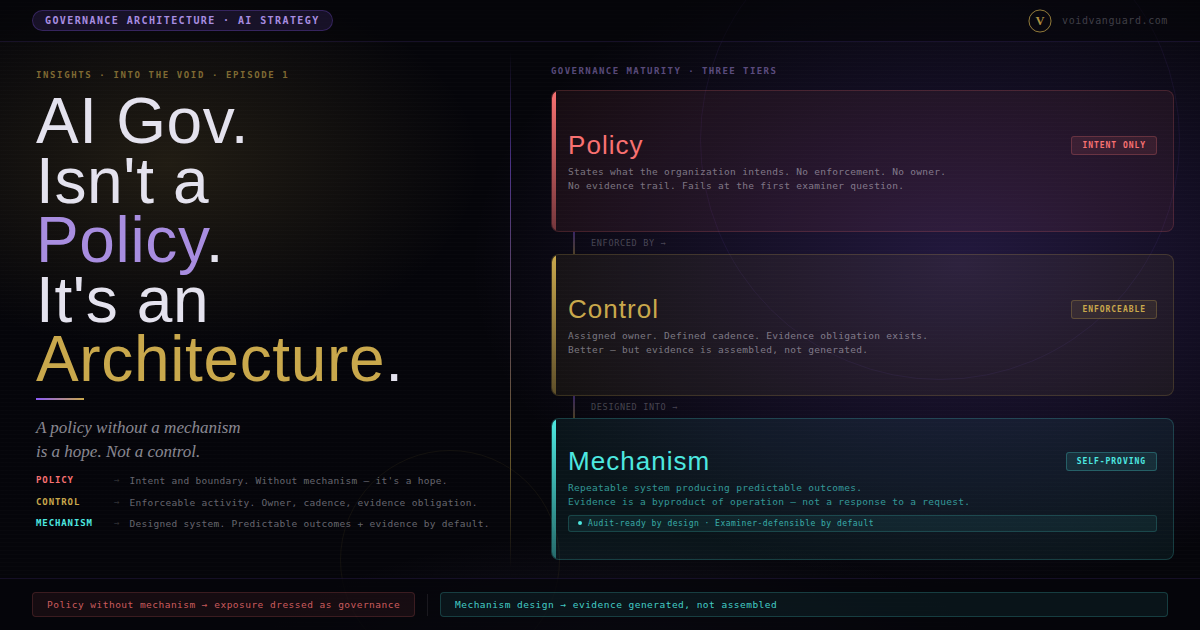

The policy isn’t badly written. The people who created it cared about the work. The problem is structural: a policy without architecture is a suggestion with a signature line. In regulated environments, suggestions do not survive examination.

What is governance architecture? Governance architecture is the set of controls, evidence artifacts, and feedback loops that turn a policy declaration into an enforceable, verifiable outcome. A policy says what should happen. An architecture is the system that makes it happen and generates the evidence that proves it happened, as a byproduct of operation. In federally regulated institutions, architecture is the difference between a program that survives examination and a program that produces findings.

The Pattern

The sequence is almost always the same. An organization decides it needs to "address AI." A working group forms. A policy is drafted. Legal reviews it. The board is briefed. The box gets checked.

Nothing actually changed.

The policy says employees should use AI responsibly. It says they shouldn’t paste sensitive data into public models. It might reference "approved tools." What it does not say is how anyone is supposed to know whether people are following it.

There’s no mechanism to detect noncompliance. No evidence trail that proves adherence. No control that enforces the boundary. There’s a document. And hope.

In regulated environments, hope is not a control.

Policy vs. Architecture: A Structural Distinction

This isn’t semantics. It’s structural.

A policy is a declaration of intent. It says: here’s what we expect, what we allow, where the boundaries are.

An architecture is the system that makes those declarations real: the controls, the evidence, and the feedback loops that turn intent into enforcement.

Every organization reading this almost certainly has a logical access policy. It says who should have access to what, based on role and need. That policy has existed for years.

The policy didn’t stop role explosion. It didn’t prevent orphaned accounts. It didn’t catch the contractor who still had admin access six months after the project ended.

Architecture caught those things. SailPoint IdentityNow running joiner-mover-leaver automation against the authoritative HR feed. CyberArk vaulting privileged credentials with session recording. Access certification campaigns with real attestation workflows, structural escalation, and auto-revocation at day 15. Not a policy document. A system that produced the outcome regardless of whether any individual manager remembered the quarterly review was open.
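To make the certification example concrete, here is a minimal sketch of the decision logic behind a campaign with escalation and auto-revocation. It is not SailPoint's API or data model. The `CertificationItem` type, the function names, and the day-7 escalation threshold are assumptions made for illustration; only the day-15 revocation comes from the description above.

```python
from dataclasses import dataclass
from datetime import date

ESCALATE_AFTER_DAYS = 7   # assumption: the article does not state an escalation day
REVOKE_AFTER_DAYS = 15    # from the article: auto-revocation at day 15


@dataclass
class CertificationItem:
    """One access entitlement waiting for a reviewer's attestation (hypothetical type)."""
    account: str
    reviewer: str
    opened: date
    attested: bool = False


def next_action(item: CertificationItem, today: date) -> str:
    """Return what the campaign does with one item on a given day."""
    if item.attested:
        return "keep"
    age = (today - item.opened).days
    if age >= REVOKE_AFTER_DAYS:
        return "revoke"
    if age >= ESCALATE_AFTER_DAYS:
        return "escalate"
    return "remind"


def run_campaign(items, today: date) -> list:
    """Decide an action for every item and return one evidence record per item."""
    return [
        {
            "account": item.account,
            "reviewer": item.reviewer,
            "action": next_action(item, today),
            "as_of": today.isoformat(),
        }
        for item in items
    ]


if __name__ == "__main__":
    stale = CertificationItem("contractor-admin", "mgr.lee", date(2025, 1, 1))
    print(run_campaign([stale], date(2025, 1, 16))[0]["action"])  # revoke
```

The point of the sketch is the shape: the outcome does not depend on the reviewer remembering anything, and every run leaves a record behind.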

The policy said what should happen. The architecture made it happen. When the examiner showed up, they didn’t ask to see the policy… they asked to see the evidence that the policy was being enforced.

The Governance Spine

When I evaluate an organization’s governance posture (AI, identity, data, anything), I look for the same five layers. I call it the Governance Spine.

Appetite. Has leadership articulated what level of risk they are willing to accept, in specific, documented terms that downstream decisions can anchor to? Not "we take AI risk seriously." Specific tolerance statements with specific boundaries.

Strategy. Is there a plan that translates appetite into operational priorities? The priorities must be sequenced and resourced, not just a wish list.

Controls. Are there mechanisms that enforce the strategy? Technical controls. Process controls. Things that actually prevent or detect noncompliance without depending on individual human discipline.

Evidence. Do those controls generate artifacts that prove they are working? Logs, attestations, metrics, things an examiner can independently verify without asking the institution to reconstruct anything afterward.

Reporting. Does that evidence roll up into something leadership can act on? Not a dashboard nobody looks at. A structure that creates accountability by putting the right signal in front of the right person at the right cadence.

Five layers. Most organizations have the first two or three... maybe. They’re generally missing the bottom two entirely.

They may have a policy, an appetite, and a strategy. They often do not have the architecture (the controls, evidence, and reporting) that makes the policy real.
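One way to picture the Evidence layer is a log that an examiner can verify independently. The sketch below chains each record to the previous one with a SHA-256 hash, so an edited or reordered record is detectable on recomputation. This is a common tamper-evidence technique, not something the framework prescribes, and the function names and record fields are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_evidence(log: list, event: dict) -> dict:
    """Append an event whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(event, sort_keys=True)
    entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
    entry = {"event": event, "prev": prev_hash, "hash": entry_hash}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited, reordered, or removed interior entry fails."""
    prev_hash = GENESIS
    for entry in log:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_evidence(log, {"control": "dlp-001", "result": "blocked"})
    append_evidence(log, {"control": "dlp-001", "result": "allowed"})
    print(verify_chain(log))
```

A chain like this does not detect truncation of the most recent entries on its own. Catching that would need the latest hash to be stored somewhere the log's owner cannot rewrite.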

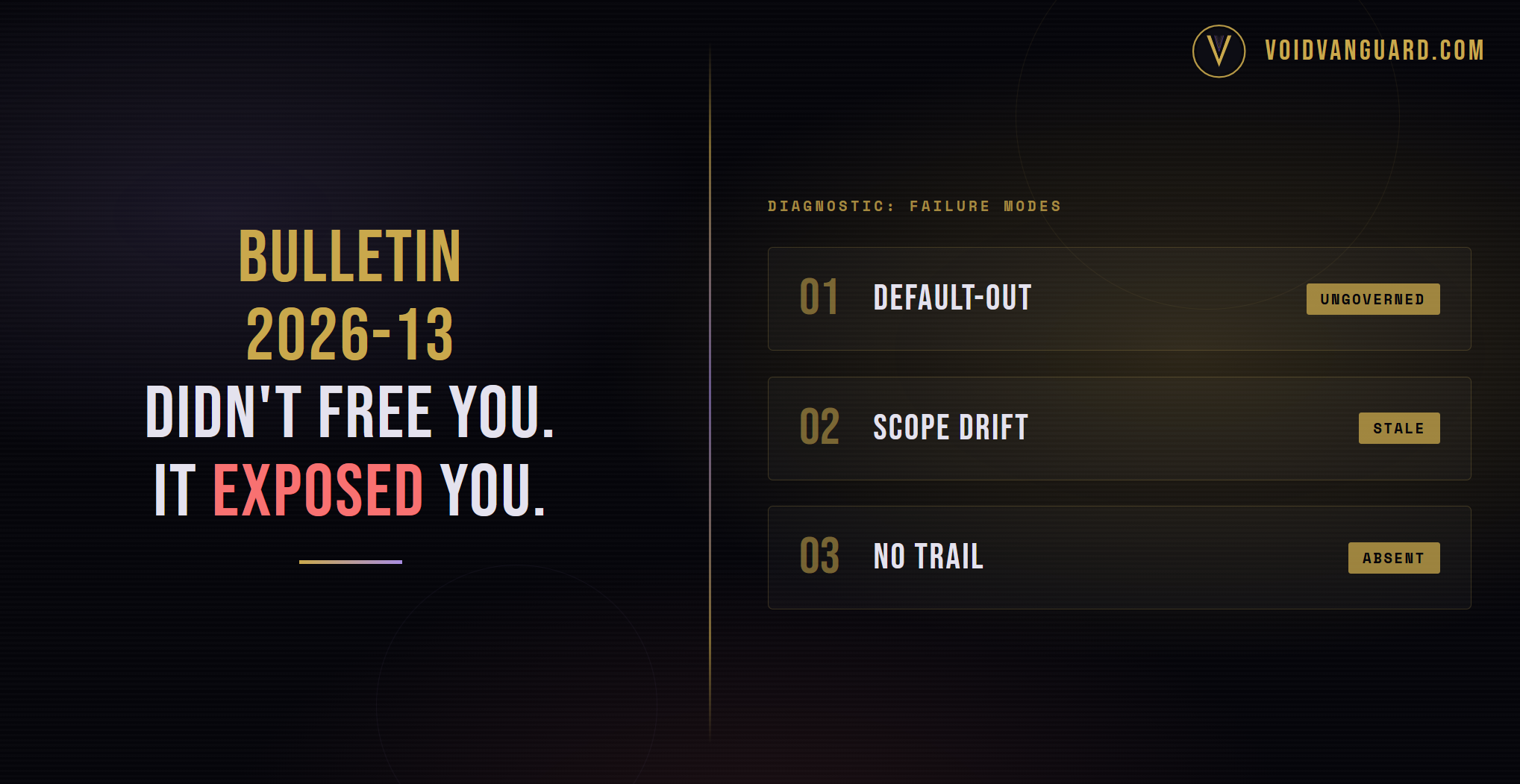

Why This Matters More for AI

Two factors make the policy-architecture gap more dangerous for AI than for traditional IT governance, and they compound each other.

The first is speed. AI adoption is not happening on your governance timeline. It is happening on your employees’ curiosity timeline, which is roughly the duration of a coffee break. Every day you operate with a policy but no architecture, the gap between what your organization says and what your organization does gets wider.

The second is visibility. Traditional IT controls let you see what is deployed. You inventory it. You monitor it. Shadow AI does not work that way. Someone pastes a client contract into ChatGPT to summarize it. A developer pulls an OpenAI API key into a side project and feeds production data through it. A business analyst uses Claude to rewrite a memo that contains material non-public information. Your current monitoring architecture probably cannot distinguish between someone running a search query and someone feeding regulated data into a large language model.
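A first step toward that visibility can be as simple as labeling outbound requests by destination. The sketch below assumes a proxy log reduced to (user, URL) pairs and a small hand-maintained host list; both are illustrative assumptions, and a real deployment would need a maintained feed of AI endpoints.

```python
from urllib.parse import urlparse

# Assumption: a short, hand-maintained list used only for illustration.
LLM_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "api.openai.com": "OpenAI API",
    "claude.ai": "Claude",
    "api.anthropic.com": "Anthropic API",
}


def classify_request(url: str) -> str:
    """Label an outbound URL as a known LLM endpoint or as generic web traffic."""
    host = (urlparse(url).hostname or "").lower()
    for known, label in LLM_HOSTS.items():
        if host == known or host.endswith("." + known):
            return label
    return "web"


def summarize(proxy_log) -> dict:
    """Count requests per (user, label) from an iterable of (user, url) pairs."""
    counts = {}
    for user, url in proxy_log:
        key = (user, classify_request(url))
        counts[key] = counts.get(key, 0) + 1
    return counts


if __name__ == "__main__":
    sample = [
        ("analyst1", "https://claude.ai/new"),
        ("dev7", "https://api.openai.com/v1/chat/completions"),
        ("dev7", "https://www.example.com/search?q=quarterly+report"),
    ]
    print(summarize(sample))
```

This only tells you where traffic went. It says nothing about whether regulated data was in the request, which is why destination labeling is a starting point and not a control by itself.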

A policy that says "don’t do that" paired with a control environment that cannot tell you whether anyone is listening is not governance. It is a liability with a letterhead.

The Shift

Moving from policy to architecture starts with one honest question:

For every statement in your AI policy, can you point to a control that enforces it, evidence that proves it is working, and a report that tells leadership when it is not?

If the answer is no, and for most organizations right now the answer is a definite no, that is the gap. That is the work.
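That question can be run as a literal check. The sketch below models each policy statement with optional pointers to a control, an evidence artifact, and a report, then lists what is missing. The `PolicyStatement` type and the sample values are hypothetical, not drawn from any real policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PolicyStatement:
    """One sentence from the policy plus the architecture that backs it (if any)."""
    text: str
    control: Optional[str] = None
    evidence: Optional[str] = None
    report: Optional[str] = None


def gaps(statement: PolicyStatement) -> list:
    """Return the architecture layers this statement cannot point to."""
    return [
        name
        for name in ("control", "evidence", "report")
        if not getattr(statement, name)
    ]


def coverage_report(statements) -> dict:
    """Map each statement's text to its missing layers (empty list means covered)."""
    return {s.text: gaps(s) for s in statements}


if __name__ == "__main__":
    policy = [
        PolicyStatement("Do not paste sensitive data into public models"),
        PolicyStatement(
            "Use approved tools only",
            control="egress allowlist",
            evidence="proxy logs",
            report="monthly risk committee pack",
        ),
    ]
    for text, missing in coverage_report(policy).items():
        print(text, "->", missing or "covered")
```

Any statement that comes back with a non-empty list lives in the policy layer only.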

You don’t need a perfect framework on day one. You need a spine. Structural integrity that turns intent into evidence.

Start with one use case. One AI tool your organization has actually approved. Build the governance architecture around it. What is the control? What evidence does it generate? Who sees the report? What happens when something deviates?

That is one vertebra. Build the next one.

That is how you go from a document that describes governance to a system that delivers it.

Bottom Line

If someone asks whether your organization has AI governance, and your answer is "yes, we have a policy," that is not a yes. That is a hope.

Governance isn’t a document. It’s a mechanism. Mechanisms either exist or they don’t.

Frequently Asked Questions

What is the difference between an AI policy and AI governance architecture?

An AI policy is a written declaration of intent: what the organization allows, prohibits, and expects. An AI governance architecture is the system of controls, evidence artifacts, and feedback loops that makes the policy operational and verifiable. A policy without an architecture cannot be enforced, cannot be tested, and cannot be defended in front of an examiner. Most organizations confuse the two and assume that writing the policy completes the governance work. It does not.

What is the Governance Spine?

The Governance Spine is a five-layer structural framework: Appetite, Strategy, Controls, Evidence, Reporting. Each layer traces to the one below it. Risk appetite defines what the organization is willing to accept. Strategy translates appetite into operational priorities. Controls enforce the strategy. Evidence proves the controls operated. Reporting surfaces evidence to decision-makers. Many organizations have the first two layers and are missing the bottom three, which means they have a policy, nothing to prove it is working, and, more importantly, no visibility into when it is not working.

How do I know if my AI governance is a policy or an architecture?

Ask one question: for every statement in your AI policy, can you point to a control that enforces it, an evidence artifact that proves the control operated, and a report that surfaces deviations to leadership? If the answer is no for any statement, that statement lives in the policy layer only. Most AI policies in 2026 are entirely policy-layer with no architecture underneath, which is why AI governance findings are about to become the most common class of examination finding in regulated industries.

What does an AI control actually look like in practice?

A real AI control is a mechanism that either prevents or detects a specific behavior without depending on individual human discipline. Examples: a data loss prevention rule that blocks "Restricted" classified data from being pasted into a public LLM browser extension; a Non-Human Identity registration requirement that forces every deployed AI service account into SailPoint IdentityNow with an owner, a supervisor, and a defined use case; an OAuth scope review gate at deployment that requires explicit approval before an AI tool can access production data. Each of these is a control because it produces a verifiable outcome without relying on someone remembering a policy.
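As an illustration of the third example, here is a minimal sketch of an OAuth scope review gate. The scope names, the `prod.` prefix convention, and the function name are assumptions invented for this example; real scope strings depend on the provider and on how the organization names its resources.

```python
# Assumption: scopes that touch production data share this prefix.
PRODUCTION_PREFIX = "prod."


def review_scopes(requested: set, approved: set) -> dict:
    """Block deployment while any production scope lacks explicit approval."""
    blocked = {
        scope
        for scope in requested
        if scope.startswith(PRODUCTION_PREFIX) and scope not in approved
    }
    return {
        "deploy": not blocked,                   # True only when nothing is blocked
        "blocked": sorted(blocked),              # scopes still waiting for sign-off
        "allowed": sorted(requested - blocked),  # scopes that passed the gate
    }


if __name__ == "__main__":
    decision = review_scopes(
        requested={"prod.customers.read", "sandbox.read"},
        approved=set(),
    )
    print(decision)
```

The returned dictionary doubles as the evidence artifact: it records what was requested, what was blocked, and why the deployment did or did not proceed.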

What is "shadow AI" and why is it a governance problem?

Shadow AI is unauthorized or uninventoried AI use happening inside an organization: employees pasting data into ChatGPT, developers calling OpenAI APIs from side projects, business analysts running analysis through Claude. It is a governance problem because traditional IT inventory and monitoring systems cannot see it. The AI interaction looks like normal web traffic. The sensitive data leaving the organization looks like normal clipboard behavior. Without an identity-aware data protection layer that classifies data at the source and gates retrieval by identity, shadow AI is invisible until something goes wrong and the institution discovers the breach retroactively.
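A rough sketch of what "classifies data at the source and gates by identity" could mean in code follows. The three labels, the role clearances, and the destination ceilings are all invented for illustration; a real scheme would come from the organization's own data classification standard and identity system.

```python
from enum import IntEnum


class Label(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2


# Assumption: the highest label each role may handle.
CLEARANCE = {
    "analyst": Label.INTERNAL,
    "compliance-officer": Label.RESTRICTED,
}

# Assumption: the highest label each destination may receive.
DESTINATION_CEILING = {
    "approved-internal-llm": Label.INTERNAL,
    "public-llm": Label.PUBLIC,
}


def may_send(role: str, label: Label, destination: str) -> bool:
    """Allow only when both the identity and the destination are cleared for the label."""
    clearance = CLEARANCE.get(role, Label.PUBLIC)               # unknown roles get the minimum
    ceiling = DESTINATION_CEILING.get(destination, Label.PUBLIC)  # unknown destinations too
    return label <= clearance and label <= ceiling


if __name__ == "__main__":
    print(may_send("analyst", Label.INTERNAL, "approved-internal-llm"))
    print(may_send("compliance-officer", Label.RESTRICTED, "public-llm"))
```

Defaulting unknown roles and unknown destinations to the lowest level is a deliberate design choice in this sketch: anything uninventoried is treated as able to receive public data only.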