The Void: No Mechanism, No Guardrails, No Shared Language

The most consequential AI governance decision of the year wasn't made in a legislature, a standards body, or an international summit. It was made in a contract negotiation between a defense secretary and a startup CEO, and it fell apart in less than a week.

If you watched the Anthropic-Pentagon situation unfold, you've probably seen it framed as a political story. A culture war proxy. A loyalty test dressed up as procurement policy. And there's no shortage of material for that angle: the "woke AI" rhetoric, the supply-chain risk designation historically reserved for foreign adversaries like Huawei and Kaspersky, the executive order reportedly incoming to rip Anthropic products from every federal agency.

But that framing misses the structural problem entirely.

This isn't a story about politics. It's a story about what happens when there is no governance mechanism and everyone involved is forced to improvise one in real time.

What Actually Happened

Anthropic had a $200 million contract with the Department of Defense. Its models were deployed across classified networks through Palantir. Claude was reportedly used in the planning of a military operation in Venezuela. When Anthropic raised concerns about specific use cases — autonomous weapons and mass domestic surveillance — the Pentagon responded with a binary demand: allow unrestricted use for "all lawful purposes," or lose the contract.

Anthropic refused. The Pentagon designated it a supply-chain risk. Trump ordered all federal agencies to stop using Anthropic products immediately. OpenAI signed a replacement deal within hours. One of OpenAI's senior executives resigned in protest days later, calling it a governance failure.

Every single actor in this sequence was operating without a mechanism. No binding framework. No shared control taxonomy. No escalation path. No evidence architecture. Just contract language, leverage, and press releases.

When Caitlin Kalinowski — the OpenAI hardware lead who resigned — described the situation, she didn't frame it as ideological. She called it what it was: a governance failure. The deal, she said, was rushed without the guardrails defined. That's not a political statement. That's a mechanism design observation.

The Governance Gap Is the Story

The Chatham House analysis put it plainly: the most consequential decisions about how AI can and cannot be used (whether it can target or kill without human oversight, whether it can surveil at scale without judicial authorization) are being made in contract negotiations, not in governance frameworks.

Think about that through the lens of any regulated industry.

Nuclear technology has binding international treaty regimes. Aviation has the ICAO framework and national regulators with enforcement authority. Pathogen research has biosafety levels, institutional review boards, and international protocols. Each of these is imperfect. But each provides something that AI governance currently does not: a mechanism layer between policy intent and operational deployment.

In the Anthropic-Pentagon dispute, that mechanism layer simply didn't exist. So the contract itself became the de facto governance instrument. And when the two parties couldn't agree on the contract, the response wasn't a governance process. It was a power play.

What This Looks Like Through a Governance Spine

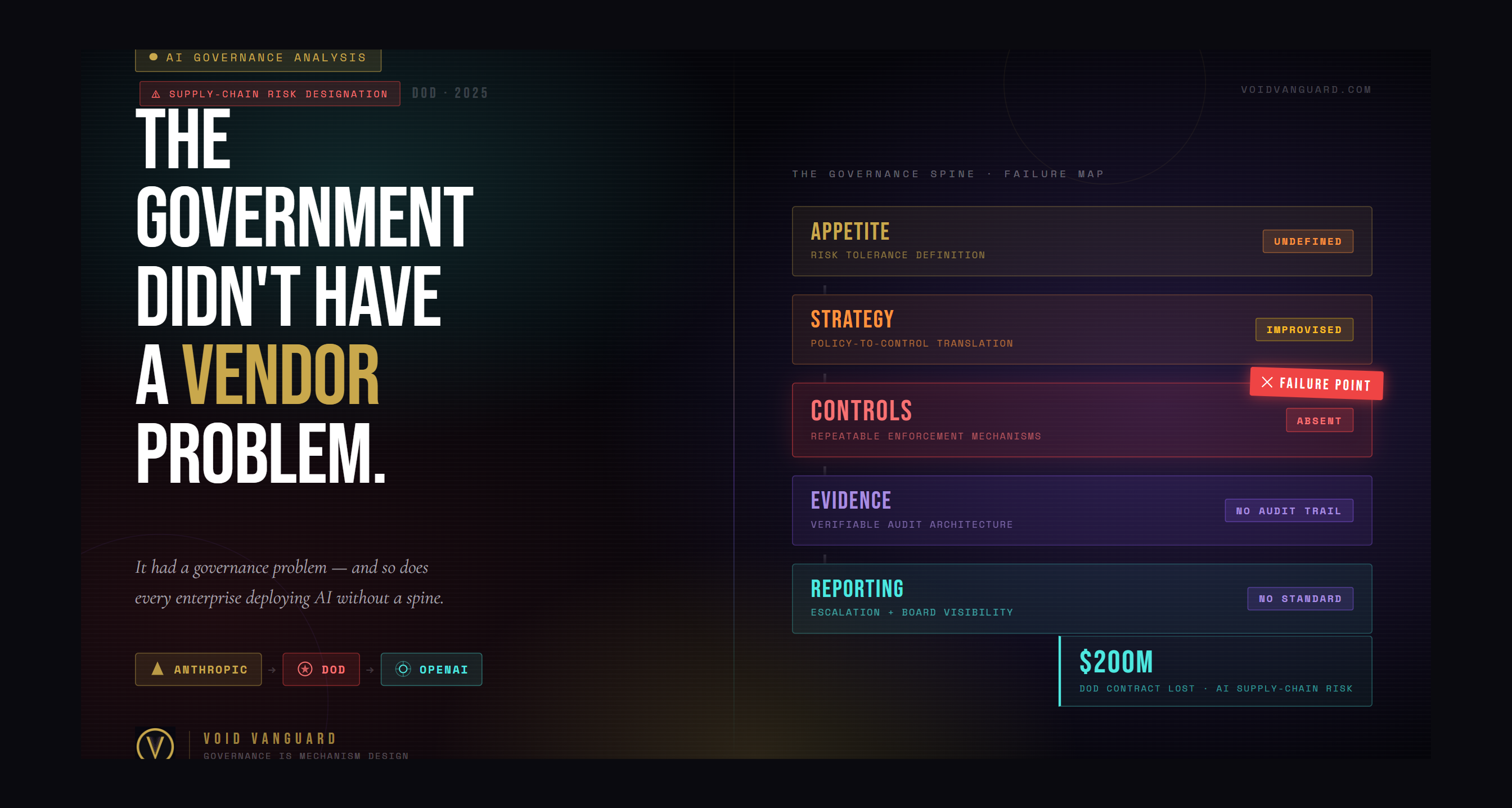

At Void Vanguard, we evaluate governance through a five-layer spine: Appetite → Strategy → Controls → Evidence → Reporting.

Apply it to this situation and the failure modes become immediately visible:

Appetite

The federal government has not articulated a coherent risk appetite for military AI. "All lawful purposes" is not an appetite statement — it's a blank check. It tells you nothing about what risk the organization is willing to accept, what it considers out of bounds, or how tradeoffs should be evaluated. Without a defined appetite, every subsequent governance decision is ad hoc.

Strategy

There is no published national strategy that maps AI capabilities to acceptable military use cases with defined boundaries. The Delhi AI Summit declaration was, by most accounts, aspirational and non-binding. The EU AI Act carves out national security. The U.S. has no federal law governing military AI use. Strategy requires specificity. What exists today is aspiration.

Controls

This is where the failure is most acute. A control is an enforceable activity within a mechanism. In this case, the only "control" was a contractual clause — and the parties couldn't agree on its language. There was no independent review body. No technical enforcement layer. No escalation protocol. When Anthropic raised concerns about the Venezuela operation through Palantir, the resolution mechanism was a phone call.

Evidence

No public evidence architecture exists to verify how AI systems are actually being used in military operations. There is no audit trail accessible to oversight bodies. No attestation framework. No evidence loop.

Reporting

Who reports to whom? Under what cadence? Against what benchmarks? The answer, for military AI governance in the United States right now, is: nobody reports to anybody, on no schedule, against no standard.

Every layer of the spine is either absent or operating on improvisation. That's not a vendor dispute. That's a governance vacuum.
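To make the layer-by-layer reading above concrete, here is a minimal sketch of the spine as a gap check. The `Layer` type, the `find_gaps` function, and the "absent" versus "improvised" labels are illustrative assumptions, not a published Void Vanguard tool, and the sample assessment simply encodes this article's own reading of the dispute.

```python
from dataclasses import dataclass
from typing import Optional

# The five layers of the spine, in order.
SPINE = ("Appetite", "Strategy", "Controls", "Evidence", "Reporting")


@dataclass(frozen=True)
class Layer:
    name: str
    artifact: Optional[str]  # the designed artifact backing this layer, if any
    enforceable: bool        # whether anything independent can enforce it


def find_gaps(layers):
    """Return (layer, status) pairs for every layer that is absent or improvised."""
    by_name = {layer.name: layer for layer in layers}
    gaps = []
    for name in SPINE:
        layer = by_name.get(name)
        if layer is None or layer.artifact is None:
            gaps.append((name, "absent"))
        elif not layer.enforceable:
            gaps.append((name, "improvised"))
    return gaps


# Hypothetical encoding of the assessment in this section.
assessment = [
    Layer("Appetite", None, False),
    Layer("Strategy", None, False),
    Layer("Controls", "contract clause", False),
    Layer("Evidence", None, False),
    Layer("Reporting", None, False),
]

gaps = find_gaps(assessment)
for name, status in gaps:
    print(f"{name}: {status}")
```

The useful part of the exercise is less the code than the discipline it forces: each layer has to name a concrete artifact and say who can enforce it, or it shows up as a gap.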

The Mechanism Design Problem

The deeper issue, and the one that will outlast this particular news cycle, is that we are deploying frontier AI systems into the highest-stakes operational environments on earth without any mechanism design.

A mechanism, in the way we use the term at Void Vanguard, is a designed, repeatable system that produces predictable governance outcomes. It's not a policy document. It's not a set of principles. It's not a press release about red lines. It's the operational architecture that ensures policy intent translates into verifiable, defensible behavior.

Right now, the entire AI-military governance model consists of: voluntary corporate principles (which vary by company and can change overnight), contract language (which is negotiated bilaterally, behind closed doors, with no independent oversight), and executive action (which can designate you a supply-chain risk on a Friday afternoon).

None of these are mechanisms. They're all just artifacts of power dynamics.

What Would a Mechanism Look Like?

At minimum:

- A risk appetite framework that distinguishes between acceptable and unacceptable military AI applications with specificity, not slogans.

- An independent technical review body with authority to evaluate deployment contexts before they occur.

- An evidence architecture that creates auditable, tamper-resistant records of how AI systems are used in operational settings.

- Defined escalation protocols that don't depend on a CEO calling a contractor when something feels wrong.

- Binding commitments that survive changes in political leadership, because a governance mechanism that evaporates every four years is not a mechanism at all.
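Of the items above, the evidence architecture is the easiest to sketch in code. One common building block for tamper-resistant records is a hash chain, where each entry commits to the one before it. The example below is a minimal illustration under that assumption; the `EvidenceLog` class and the record fields (`system`, `use_case`, `approved_by`) are hypothetical and do not describe any deployed system.

```python
import hashlib
import json


def _digest(prev_hash, payload):
    """Hash the previous entry's digest together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class EvidenceLog:
    """Append-only log in which each entry commits to the one before it."""

    GENESIS = "0" * 64  # placeholder digest that precedes the first entry

    def __init__(self):
        self.entries = []

    def append(self, payload):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every digest; an edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append({"system": "model-a", "use_case": "logistics-planning", "approved_by": "review-board"})
log.append({"system": "model-a", "use_case": "translation", "approved_by": "review-board"})
intact_before = log.verify()

# Simulate an after-the-fact edit to the first record.
log.entries[0]["payload"]["use_case"] = "something-else"
intact_after = log.verify()

print(intact_before, intact_after)
```

A chain like this is tamper-evident, not tamper-proof: anyone who controls the whole log can rewrite it and recompute every hash. That is why the latest digest would need to be published to, or countersigned by, a party outside the operator's control, which is exactly the role an independent review body could play.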

This isn't utopian. This is how every other high-stakes technology domain operates.

Why This Matters Beyond Defense

If you work in AI governance at a financial institution, a healthcare system, or a mid-market enterprise, you might be tempted to view this as a defense-sector problem. It isn't.

The same structural gap exists in every organization deploying AI without a governance mechanism. The same failure modes apply:

- No articulated risk appetite for AI use cases

- No control taxonomy mapped to specific deployment contexts

- No evidence architecture that creates auditable records of AI behavior

- No escalation path when a model does something unexpected

The Pentagon just demonstrated what happens at scale. The dispute isn't about Anthropic or OpenAI or Pete Hegseth. It's about the total absence of the governance infrastructure that should have made this situation impossible in the first place.

If your organization is deploying AI and your governance model consists of a usage policy and a hope that your vendor's principles hold up under pressure, you're operating in the same void.

The Bottom Line

Anthropic drew a line. The government drew a different one. OpenAI walked through the gap. An executive resigned. A lawsuit was filed. Employees at rival companies filed amicus briefs in support of the company their employers are competing against.

None of this should have been necessary. All of it was inevitable, because when there is no mechanism, every decision becomes a power struggle.

Governance is mechanism design, not compliance theater. And what the Anthropic-Pentagon dispute reveals, more clearly than any white paper or framework document ever could, is that we haven't even started building the mechanisms that matter.