Picture this. You’re sitting across from an examiner who has just asked a question that stopped a room full of executives mid-sentence: "Show me the evidence that this AI system was approved at the appropriate governance level."

Could you? The system has been running for eight months. It touches customer data. It influences a downstream lending workflow. And the best anyone can produce is a Slack thread in which a VP said "looks good, let’s roll it out."

That is not governance. That is an MRA waiting to be written.

If you are a CISO, CRO, or CTO at a community or regional bank right now, this scenario is not hypothetical. It is the most predictable regulatory event on your horizon, and the window to get ahead of it is narrower than most people realize.

What do federal examiners actually test in AI governance?

Federal examiners do not test whether you have an AI policy. They test whether your institution can produce contemporaneous, system-generated evidence that AI systems were approved at the correct governance level, classified by risk tier, and monitored with traceable artifacts. The question is not what the policy says. It is what the walkthrough reveals.

The Convergence You Can Get Ahead Of

Two forces are defining what AI governance looks like for community banks in 2026. The first is supervisory: the OCC named AI and emerging technology risk as a supervisory priority in the 2024 Semiannual Risk Perspective, and language like that tends to sharpen over time, not soften. The second is the NIST AI Risk Management Framework, which has established the vocabulary regulators are converging on: Govern, Map, Measure, Manage. It is not a mandate yet, but it is rapidly becoming the lens through which examiners evaluate whether an institution has a governance architecture or just a governance policy.

The trajectory is the same one cybersecurity governance followed a decade ago. Institutions that built the architecture early had 12–18 months of evidence compounding by the time expectations crystallized. If you lived through that transition, you know which side of it you want to be on this time.

What Examiners Actually Ask

I spent a decade preparing governance programs for examination cycles at a financial institution moving through a bank charter acquisition into federal supervision, and I have since talked with CISOs and CROs preparing for their first AI-focused exam cycle. In that experience, examiner questions about AI governance cluster into four specific areas. None of them is about whether you have a policy.

AI Inventory and Classification

Can you produce a complete inventory of AI tools, models, and integrations in use across your institution, including the ones embedded in vendor platforms your team did not realize had AI features? The CRM vendor’s new "smart summary" button. The core banking platform’s anomaly detection module. The document management system’s classification AI. Every one of them is AI. Every one of them needs to be in the inventory, classified by risk tier. If the examiner asks "what AI is your bank running?" and more than a few seconds of silence pass before anyone begins to answer, that is a finding in progress.
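One way to make the inventory testable is to hold it as structured records rather than a spreadsheet of free text. The sketch below is illustrative only: the `AISystem` fields, the three-tier `RiskTier` scheme, the owner names, and the `inventory_gaps` helper are all assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    # Hypothetical three-tier scheme; substitute the tiers your policy defines.
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


@dataclass
class AISystem:
    name: str
    source: str                            # e.g. "vendor-embedded" or "internally deployed"
    owner: Optional[str] = None            # named accountable person, None if unassigned
    risk_tier: Optional[RiskTier] = None   # None until the system has been classified


def inventory_gaps(systems: List[AISystem]) -> List[str]:
    """Return the names of systems that lack an owner or a risk tier."""
    return [s.name for s in systems if s.owner is None or s.risk_tier is None]


# Illustrative entries only; the owners are placeholders.
inventory = [
    AISystem("CRM smart summary", "vendor-embedded", "J. Doe", RiskTier.MODERATE),
    AISystem("Core platform anomaly detection", "vendor-embedded", None, RiskTier.HIGH),
    AISystem("Document classification AI", "vendor-embedded", "A. Smith", None),
]

print(inventory_gaps(inventory))
```

Run against the sample entries, the helper lists the two systems that would stall a walkthrough: one with no named owner and one with no risk tier.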

Decision Authority

Who approved the deployment of each AI system? At what governance level? Is there documentation showing the approval authority matched the risk tier? The examiner is not asking if you have an AI policy. They are asking if you have a decision authority structure — and whether it has ever been exercised.
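The check an examiner is effectively running here can be sketched as a lookup: does a recorded approval exist, and was it made at or above the level the risk tier requires? The approval bodies, their ranking, and the tier-to-authority mapping below are hypothetical placeholders for whatever your own decision authority structure defines.

```python
from typing import Optional

# Hypothetical approval bodies, ranked from lowest to highest authority.
AUTHORITY_RANK = {"department_head": 1, "risk_committee": 2, "board": 3}

# Hypothetical policy: the minimum approval body required for each risk tier.
REQUIRED_AUTHORITY = {
    "low": "department_head",
    "moderate": "risk_committee",
    "high": "board",
}


def approval_matches_tier(risk_tier: str, approved_by: Optional[str]) -> bool:
    """True if a recorded approval exists at or above the level the tier requires."""
    if approved_by is None:
        # No formal approval on record (for example, only an informal chat message).
        return False
    required = REQUIRED_AUTHORITY[risk_tier]
    return AUTHORITY_RANK[approved_by] >= AUTHORITY_RANK[required]


print(approval_matches_tier("high", "risk_committee"))   # approved one level too low
print(approval_matches_tier("moderate", "board"))        # approved above the minimum
print(approval_matches_tier("high", None))               # no approval artifact at all
```

The three calls print `False`, `True`, and `False`. The first and third cases are the ones that surface when approval samples are pulled.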

Evidence of Governance, Not Just Documentation

Examiners have read enough policy documents to know a policy is not a control. They want evidence that governance is operational: meeting minutes with actual decisions recorded, approval artifacts with signatures, monitoring outputs that show someone is watching. "We have an AI governance policy" and "we can prove our AI governance program is functioning" are separated by an ocean of operational maturity. Examiners test for the latter. And they test for it by pulling samples, not by reading the binder.

Model Risk Overlap

Examiners are probing whether you have performed a Model vs. Non-Model Determination for each AI system in your environment. The interagency model risk guidance (most recently revised in OCC Bulletin 2026-13, April 2026) defines a model as a complex quantitative method that produces estimates for business decisions — a credit scoring algorithm qualifies, a meeting summarizer does not. Critically, the revised guidance explicitly excludes generative AI and agentic AI from its scope. The examiner’s question is not whether every AI tool is a model. It is whether you evaluated the question for each one, documented the rationale, and can produce the Determination on request — including for AI systems the published guidance chose not to cover. An institution that never performed the Determination is the one that produces the finding.
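A Determination is easier to produce on request if it is captured as a record with its rationale at the moment it is made. The sketch below is a deliberate simplification: it reduces the definition described above to two yes/no questions, and the `Determination` fields, outcome labels, and `record_determination` function are assumptions for illustration, not language from the guidance.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Determination:
    system: str
    outcome: str      # "model", "non_model", or "outside_guidance_scope"
    rationale: str
    decided_on: date


def record_determination(system: str, produces_quantitative_estimates: bool,
                         generative_or_agentic: bool, rationale: str,
                         decided_on: date) -> Determination:
    """Classify one AI system and refuse to record a decision without a rationale."""
    if not rationale.strip():
        raise ValueError("A Determination needs a documented rationale.")
    if generative_or_agentic:
        # Per the article, the revised guidance places these outside its scope.
        outcome = "outside_guidance_scope"
    elif produces_quantitative_estimates:
        outcome = "model"
    else:
        outcome = "non_model"
    return Determination(system, outcome, rationale, decided_on)


credit = record_determination(
    "Credit scoring algorithm", True, False,
    "Produces quantitative estimates used in lending decisions.", date(2026, 5, 1))
assistant = record_determination(
    "Generative chat assistant", False, True,
    "Generative AI; governed under the institution's own AI framework.", date(2026, 5, 1))

print(credit.outcome, assistant.outcome)
```

The point of the `ValueError` is that an outcome without a rationale is not a Determination an examiner can evaluate.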

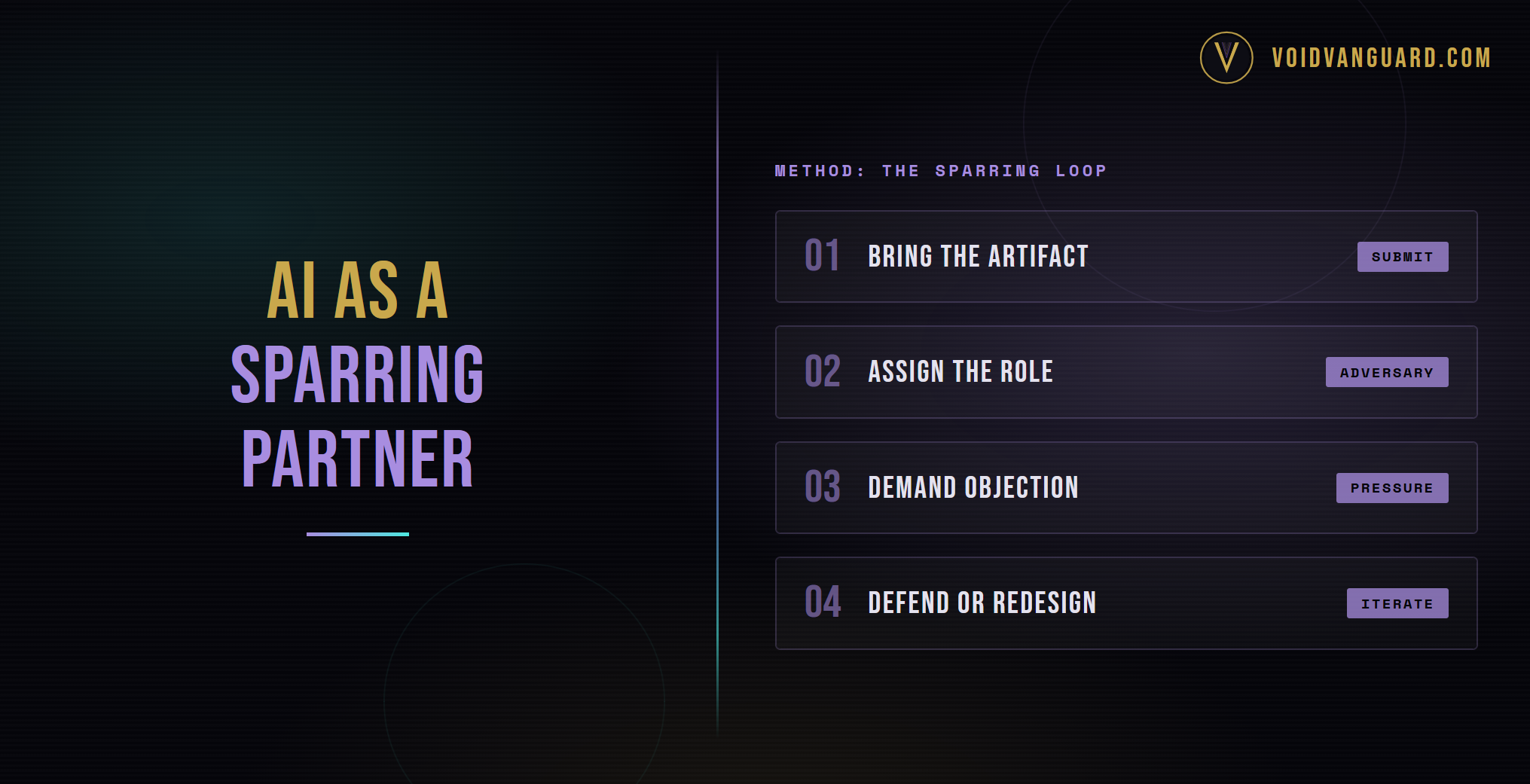

The V³ Diagnostic

I structure every AI governance diagnostic around three vectors. Not because frameworks are interesting, but because these are the three operational questions that examiner scrutiny ultimately reduces to in practice. Together, the vectors form the visible face of V³ — the Void Vanguard Domain Assessment framework. It maps to how the NIST AI RMF functions translate into operational governance: Visibility aligns with Map, Velocity with Measure and Manage, Verification with Govern’s evidence and reporting requirements.

Visibility: Can You See It?

A complete AI inventory. Risk classification. Named ownership. If you cannot enumerate every AI system in your environment and name the person accountable for each one, you have a visibility gap the examiner will find before you do.

Velocity: Can You Move on It?

Decision authority, access controls, policy enforcement, incident response capability. Governance that exists only in documents — without operational enforcement mechanisms — is governance theater. Examiners can tell the difference in about ten minutes.

Verification: Can You Prove It?

Attestation cycles, audit trails, monitoring outputs, examiner-ready documentation. Every control must generate its own evidence, or it is an assertion without proof. Assertions do not survive examinations.
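The article does not prescribe a mechanism for evidence generation. One possible approach, offered here as an assumption, is an append-only log in which each entry carries a hash of the previous one, so later edits to the trail are detectable. The function names, field names, and sample entries below are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON rendering of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_evidence(log: list, control: str, detail: str, timestamp: str) -> None:
    """Append one evidence entry, chained to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"control": control, "detail": detail,
            "timestamp": timestamp, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify_chain(log: list) -> bool:
    """Recompute every hash and link; False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


evidence_log = []
append_evidence(evidence_log, "ai-inventory-review",
                "Quarterly inventory review completed", "2026-01-15T10:00:00Z")
append_evidence(evidence_log, "deployment-approval",
                "High-tier system approved by board", "2026-02-03T14:30:00Z")

print(verify_chain(evidence_log))
```

A production evidence store would also need access controls and retention rules. The sketch only shows how a control can emit a traceable artifact as a byproduct of running.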

What to Do in the Next 90 Days

Days 1–30: Visibility

Conduct the AI census. Identify every AI tool, model, and vendor integration you can see directly — what your employees downloaded, what your IT team deployed, what AI features are activated in platforms you already run. For vendor-embedded AI, initiate the outreach in Week 1 but do not hold the census hostage to vendor response timelines (those typically run 30–60+ days). Classify what you can see, flag what requires vendor confirmation, and build the evidence that you asked the question. This alone puts you ahead of the majority of community banks.

Days 31–60: Velocity

Establish decision authority. Define who can approve, deploy, modify, and retire AI systems by risk tier. Document the first round of retroactive approvals. Build the evidence artifact that proves governance existed before the examiner asked for it.

Days 61–90: Verification

Activate the evidence loop. Ensure every governance control produces a traceable artifact. Run the first governance review cycle. Produce the first board-ready report that demonstrates AI governance posture with evidence, not narrative.

This is not a multi-year transformation. It is a 90-day sprint that produces a defensible foundation. The institutions that build governance architecture now will have 12–18 months of evidence by the time examiner expectations fully crystallize. Those that wait will be building under examination pressure — reactive, rushed, and without the evidence trail that only time can produce.

Bottom Line

The distance between "we need AI governance" and "we can prove we have it" is shorter than most institutions think. Inventory, decision authority, evidence loops, and board reporting are achievable inside a single quarter, and every month the program runs after that adds to an evidence trail that cannot be manufactured under examination pressure.

Every community bank in the country will have this conversation in 2026. The only variable is whether your institution arrives with architecture or with a policy binder.

Frequently Asked Questions

What AI does my community bank already have that I should be inventorying?

More than you think. Your core banking platform probably has embedded AI for anomaly detection or fraud flagging. Your CRM likely has AI-powered summarization or customer insights. Your document management system may have classification AI. Your collaboration tools almost certainly have generative AI features that were turned on by the vendor without a governance review. The AI census is not just about what your team downloaded — it is about what your vendors embedded in systems you were already paying for. Expect to find 3–5x more AI exposure than your initial guess.

What is the difference between an AI policy and AI governance that examiners will accept?

An AI policy is a written declaration of what should happen. AI governance is the operational machinery that makes it happen and generates evidence as a byproduct. Examiners test the latter, not the former. If your AI governance posture is "we wrote a policy and the board approved it," that is a policy layer with no architecture underneath. Examiners look for four things: inventory with risk classification, decision authority with documented exercises, evidence with traceable artifacts, and reporting with a defined cadence. Miss any of the four and the program fails the walkthrough.

How does the revised model risk guidance apply to AI systems at community banks?

The revised interagency guidance (OCC Bulletin 2026-13, April 2026) narrows the model definition and explicitly states that generative AI and agentic AI are not within its scope. A credit scoring algorithm still qualifies as a model. A meeting summarizer does not. The critical nuance is what the exclusion implies: generative AI tools such as ChatGPT and Copilot, and systems built with frameworks such as LangChain, sit outside the model risk framework entirely. That does not mean they are ungoverned. It means there is no published federal framework covering them, which makes your own governance architecture the only defensible position. The governance action is a Model vs. Non-Model Determination for each AI system: evaluate it against the current interagency definition, document the rationale, and, for AI systems that fall outside the guidance’s scope, anchor your governance to the NIST AI RMF (Govern, Map, Measure, Manage) as the recognized framework for AI risk management.

How long do I have before examiners start asking these questions?

The 2024 OCC Semiannual Risk Perspective named AI as a supervisory priority, and that language sharpens rather than softens over time. If your bank has an exam cycle in 2026, the probability of AI-focused questions is high. If your cycle is in 2027, AI-focused questions are a near certainty. Either way, the 90-day sprint to a defensible foundation needs to start now, because 12–18 months of evidence compounds in ways that a panicked pre-exam scramble cannot replicate.

What is V³ Domain Assessment?

V³ is the Void Vanguard Domain Assessment framework. It evaluates governance across three vectors — Visibility, Velocity, Verification — with nine specific domains underneath them at the full matrix level. For AI governance specifically, the three vectors map to the examiner’s core questions: can you see the AI environment, can you act on it with decision authority and enforcement, and can you prove it with evidence. The framework is what I use on every diagnostic I run for mid-market regulated institutions.