I have had a version of this conversation at least a dozen times. A CISO or VP of IT Security sits across from me and says some version of the same thing: "We deployed SailPoint. We deployed CyberArk. We're on Entra ID. And the examiner still issued the same findings."

There is always frustration in the voice. Usually some anger directed at the tool vendor. Sometimes at the implementation partner. Occasionally at the examiners themselves.

But the tools are not the problem. They never were.

I spent over a decade building IAM, PAM, and security governance architecture at a $3.5B financial institution through its transition to federal supervision via a bank charter acquisition. The tools were necessary. They were also never the thing that produced the clean finding.

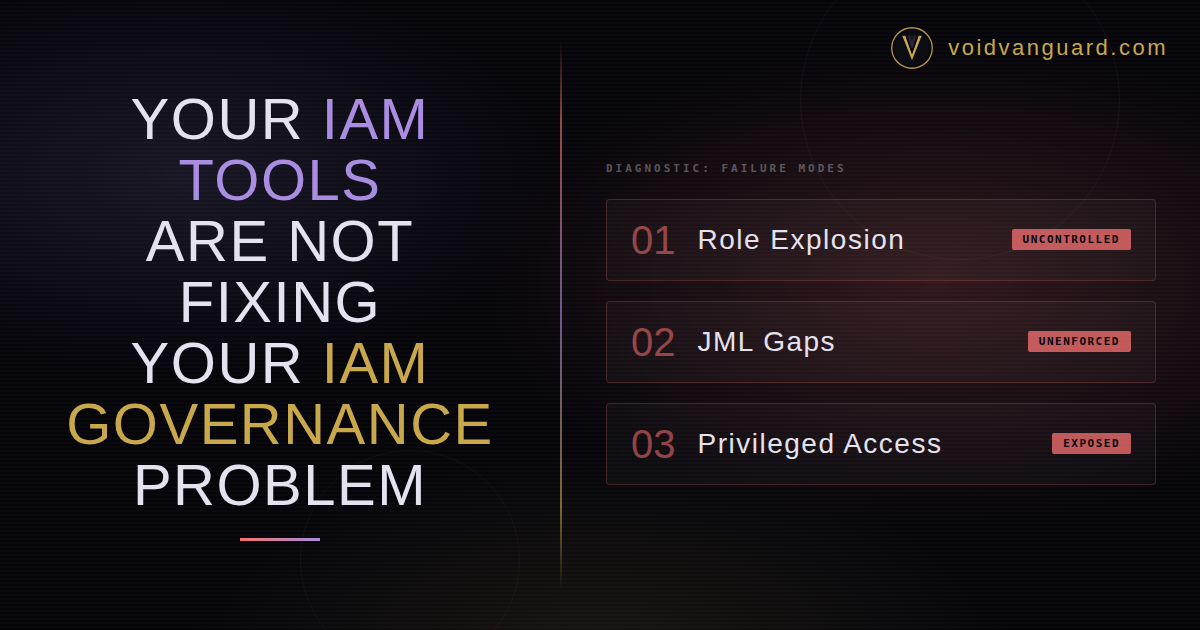

What is the tool-governance gap? IAM tools are control enforcement engines configured to automate whatever governance architecture sits underneath them. When the architecture is missing, incomplete, or poorly designed, the tool automates the failure at enterprise scale — reliably, with audit-quality evidence of its own operation. The gap between the tool's capability and the governance it was asked to enforce is where findings live.

The Tool-Governance Gap

IAM tools are control enforcement engines. They do exactly what they are configured to do — reliably, at scale, on schedule. SailPoint will execute your access reviews exactly as configured. CyberArk will vault and rotate credentials exactly as configured. Entra ID will enforce conditional access policies exactly as configured.

The problem is what they are configured to enforce.

If the governance architecture underneath the tool is broken, the tool automates a broken process. Faster. More reliably. At scale. It does not fix the underlying design failure — it amplifies it. You now have an enterprise-grade engine running at full speed on top of a governance model that was never designed.

I see this pattern so consistently that I consider it the single most reliable indicator that an organization has confused tool deployment with governance maturity. Three failure modes show up every time.

Pattern 1: Role Explosion Without Governance

The promise of role-based access control is elegant. Define roles that match job functions. Assign users to roles. Access flows automatically. Beautiful on a whiteboard.

The reality at most mid-market institutions: roles proliferate without governance. Fine-grained entitlements accumulate under role labels that stopped matching actual job functions 18 months ago. By year two, the institution has more roles than employees and nobody can explain why half of them exist.

SailPoint can manage 5,000 roles. That is not the question. The question is whether anyone can justify why 5,000 roles exist. Whether the role model was designed against an authoritative job code and function taxonomy. Whether there is a lifecycle mechanism (a real mechanism, not a policy) that retires roles and their associated access when job functions change.

When the examiner reviews the role model and asks "what governance process determines when a new role is created, who approves it, and how stale roles are identified and retired?" — the answer cannot be "SailPoint manages our roles." SailPoint manages what you put into it. If what you put into it is an ungoverned role explosion, you now have an automated ungoverned role explosion.

The Fix

The fix is not a better tool. It is role design governance: a real design effort with an authoritative job function taxonomy at the top, a role creation and approval workflow underneath it, a periodic role attestation cycle that actually produces rejections, and a retirement mechanism for roles that no longer map to active job functions. Once those four pieces exist, the tool enforces the governance. Not the other way around.
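As a rough illustration of the retirement mechanism, the sketch below flags roles that no longer map to an active job function or whose attestation has lapsed. Everything in it is hypothetical: the `Role` fields, the `JF-` job function codes, and the 365-day attestation window are assumptions made for the example, not SailPoint objects or a regulatory threshold.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Role:
    name: str
    job_function: str    # code from the job function taxonomy (hypothetical format)
    last_attested: date  # date of the most recent role attestation


def roles_to_retire(roles, active_job_functions, today, max_attestation_age_days=365):
    """Return names of roles that are retirement candidates.

    A role is a candidate if it maps to no active job function, or if its
    last attestation is older than the allowed window (assumed 365 days).
    """
    candidates = []
    for role in roles:
        unmapped = role.job_function not in active_job_functions
        stale = (today - role.last_attested).days > max_attestation_age_days
        if unmapped or stale:
            candidates.append(role.name)
    return candidates


roles = [
    Role("Teller", "JF-100", date(2024, 11, 1)),
    Role("Legacy Loan Ops", "JF-417", date(2024, 10, 15)),  # job function retired
    Role("Wire Approver", "JF-220", date(2022, 3, 1)),      # attestation lapsed
]
active_job_functions = {"JF-100", "JF-220"}

retire = roles_to_retire(roles, active_job_functions, today=date(2025, 1, 15))
print(retire)  # ['Legacy Loan Ops', 'Wire Approver']
```

The point of the sketch is the input, not the loop: without an authoritative list of active job functions, there is nothing to compare the role catalog against.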

Pattern 2: JML Gaps the Tool Cannot Close

Joiner-Mover-Leaver is the lifecycle mechanism that provisions access when someone joins, adjusts it when they move, and revokes it when they leave. In theory, the most fundamental IAM control. In practice, the most frequently broken.

The failure mode is almost never "we don't have a JML process." It is "our JML process has gaps that are upstream of the tool."

The Authoritative Source Gap

The most common gap is the authoritative source itself. If your HR system is not the single authoritative source for employment status, or if it covers employees but not contractors, consultants, temps, or service accounts, then your JML process has a coverage gap no tool can close. When a contractor's engagement ends and HR never records the termination because the contractor was never in the HR system to begin with, SailPoint does not know they left. The access persists. Not because SailPoint failed. Because nobody ever told it.
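A minimal sketch of how that coverage gap can be made visible: reconcile the accounts that exist against every identity source that is supposed to anchor them. The source names (`hris`, `vendor_mgmt`), the ID formats, and the data shapes are all assumptions for illustration; a real reconciliation would read from your own systems of record.

```python
def find_unanchored_accounts(accounts, identity_sources):
    """Return account names whose owner appears in no identity source.

    accounts: dict mapping account name -> owner identity ID
    identity_sources: dict mapping source name -> set of active identity IDs
    """
    known_ids = set().union(*identity_sources.values())
    return sorted(name for name, owner in accounts.items() if owner not in known_ids)


# Hypothetical sources: the HR system plus a separate contractor register.
identity_sources = {
    "hris": {"E1001", "E1002"},
    "vendor_mgmt": {"C2001"},
}
accounts = {
    "jsmith": "E1001",
    "mlee": "E1002",
    "ext-pat": "C2001",
    "ext-raj": "C2002",     # contractor never recorded anywhere
    "svc-batch": "S3001",   # service account with no owning record
}

unanchored = find_unanchored_accounts(accounts, identity_sources)
print(unanchored)  # ['ext-raj', 'svc-batch']
```

Every account in that output is one the JML process cannot revoke on a leaver event, because no source will ever report the departure.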

The Mover Problem

The second gap is the mover event. Most JML implementations handle joiners and leavers adequately. Movers (employees who change departments, roles, or reporting lines) are where access accumulates. If the mover trigger does not initiate an access recertification, the employee carries their old entitlements into the new role. Over 18 months this creates entitlement creep, which is exactly what access reviews are supposed to catch. Instead, the organization accumulates risk, blindly and at scale. Reviewers rubber-stamp certifications because they do not understand the entitlements they are approving, and the reviews become ceremonial.
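One way to picture a mover trigger that does initiate recertification is a simple set comparison between what the employee holds today and what the new role grants by default. This is a sketch under assumptions: the entitlement names and the idea of a per-role baseline are hypothetical, and the function name is mine, not a product feature.

```python
def plan_mover_access(current_entitlements, new_role_baseline):
    """Split a mover's access into keep, recertify, and grant buckets.

    Anything held today that the new role's baseline does not include is
    queued for explicit recertification instead of being carried silently.
    """
    current = set(current_entitlements)
    baseline = set(new_role_baseline)
    return {
        "keep": sorted(current & baseline),
        "recertify": sorted(current - baseline),
        "grant": sorted(baseline - current),
    }


# Hypothetical move from an accounting role to a lending role.
plan = plan_mover_access(
    current_entitlements={"email", "gl_post_journal", "wire_initiate"},
    new_role_baseline={"email", "loan_origination_read"},
)
print(plan["recertify"])  # ['gl_post_journal', 'wire_initiate']
```

The `recertify` bucket is where entitlement creep starts; forcing a decision on it at the time of the move is what keeps it out of the quarterly review backlog.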

The design principle that made JML mechanisms actually work, in every environment I built them in, was not the tool selection. It was authoritative source integrity: ensuring the system of record for identities was complete, current, and authoritative before the tool consumed it. The tool amplifies whatever you feed it. Feed it an incomplete authoritative source and you get incomplete governance.

Pattern 3: Privileged Access Without a Governance Layer

CyberArk is excellent at vaulting credentials, rotating passwords, and recording privileged sessions. What CyberArk cannot do is answer the governance questions examiners actually ask.

Who are the privileged users in this environment? What justifies each account's elevated access? How frequently is that justification reviewed? What process removes privileged access when it is no longer needed? Where is the evidence that any of the above happened last quarter?

These are governance questions, not tool questions. CyberArk provides the enforcement layer: vaulting, rotation, session recording. The governance layer (justification, periodic review, evidence of deliberate decisions) must be designed separately and then wired into the tool.

I have watched institutions respond to an examiner's request for privileged access review evidence by producing CyberArk session recordings. That answers a different question. The examiner did not ask whether sessions are recorded. They asked whether the population of privileged users is governed: justified, reviewed, and evidenced. Session recordings prove monitoring. They do not prove governance.
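To make the distinction concrete, here is a minimal sketch of a governance check over a privileged account inventory. It does not touch CyberArk at all; it assumes a separately maintained register with a justification and a last-review date per account. The field names and the 90-day review interval are assumptions chosen to match a quarterly cycle.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PrivilegedAccount:
    account: str
    owner: str
    justification: str              # business reason for the elevation
    last_reviewed: Optional[date]   # None if the justification was never reviewed


def governance_gaps(accounts, today, review_interval_days=90):
    """Map each account with a governance gap to a list of its issues."""
    gaps = {}
    for acct in accounts:
        issues = []
        if not acct.justification.strip():
            issues.append("no documented justification")
        if acct.last_reviewed is None:
            issues.append("never reviewed")
        elif (today - acct.last_reviewed).days > review_interval_days:
            issues.append("review overdue")
        if issues:
            gaps[acct.account] = issues
    return gaps


inventory = [
    PrivilegedAccount("dba-admin", "E1001", "Production database maintenance", date(2025, 2, 10)),
    PrivilegedAccount("domain-admin-old", "E1002", "", date(2024, 6, 1)),
    PrivilegedAccount("fw-admin", "E1003", "Firewall rule changes", None),
]

gaps = governance_gaps(inventory, today=date(2025, 3, 31))
print(gaps)
```

An empty result, together with the dated review records behind it, is the kind of artifact that answers the examiner's question. A session recording is not.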

Before You Blame the Tool

For any IAM control that is producing findings despite tool deployment, run three diagnostics before you call the vendor.

1. Is the authoritative source intact?

Does the system of record for identities, roles, and entitlements reflect the actual state of the organization? If it has gaps — contractors missing, stale records, mover events not triggering — no tool compensates for that. Fix the source before you tune the tool.

2. Is the mechanism designed or just described?

Is there a designed, repeatable system that produces the governance outcome regardless of whether anyone remembers the quarterly campaign is open? Or is there a policy that describes the desired state and relies on individual human discipline to achieve it? Tools enforce mechanisms. They do not create them.

3. Does the control generate evidence or activity?

There is a difference between "the access review ran" and "the access review completed with full scope, documented decisions, enforced escalation, and timestamped artifacts an examiner can walk through." The first is activity. The second is evidence. Examiners grade on the second.
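The difference can be expressed as a check a campaign either passes or fails. The sketch below tests for the four properties named above: full scope, documented decisions, enforced escalation, and timestamps. The `Decision` structure and the way overdue and escalated items are passed in are assumptions for illustration, not the export format of any tool.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Decision:
    item: str                        # entitlement or account under review
    reviewer: str
    outcome: str                     # expected: "approve" or "revoke"
    decided_at: Optional[datetime]   # None means no timestamped artifact


def evidence_gaps(in_scope, decisions, overdue_items, escalated_items):
    """List the reasons a completed campaign is activity but not evidence."""
    gaps = []
    decided = {d.item for d in decisions}
    missing = sorted(set(in_scope) - decided)
    if missing:
        gaps.append(f"scope incomplete: no decision for {missing}")
    for d in decisions:
        if d.outcome not in ("approve", "revoke") or not d.reviewer:
            gaps.append(f"undocumented decision on {d.item}")
        if d.decided_at is None:
            gaps.append(f"no timestamp on {d.item}")
    unescalated = sorted(set(overdue_items) - set(escalated_items))
    if unescalated:
        gaps.append(f"escalation not enforced for {unescalated}")
    return gaps


decisions = [
    Decision("wire_initiate", "mgr1", "approve", datetime(2025, 3, 3, 9, 30)),
    Decision("gl_post_journal", "mgr2", "revoke", None),
]
campaign_gaps = evidence_gaps(
    in_scope=["wire_initiate", "gl_post_journal", "core_admin"],
    decisions=decisions,
    overdue_items=["core_admin"],
    escalated_items=[],
)
for gap in campaign_gaps:
    print(gap)
```

This campaign "ran", but it reports three gaps: one item never decided, one decision without a timestamp, and one overdue item never escalated.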

The organizations that get the most value from their IAM investment are the ones that designed the governance architecture before configuring the tool — or, more commonly, paused after deployment, acknowledged the governance gaps the tool exposed, and redesigned the mechanisms before the next exam cycle.

Bottom Line

The single most common misreading of a failing IAM program is that the tool underneath it is the problem. It almost never is. SailPoint is a control enforcement engine. CyberArk is a control enforcement engine. Entra ID is a control enforcement engine. None of them are governance engines. The governance has to exist in the design of the mechanism the tool is asked to enforce, and the design has to exist before the tool is configured.

The tool is the amplifier. What it amplifies is your choice.

Frequently Asked Questions

If my organization just deployed SailPoint and we are still getting audit findings, what should we check first?

Check the authoritative source before anything else. If your HR system is not the single complete record of employment status for employees, contractors, consultants, temps, and service accounts, then SailPoint is running on incomplete inputs and every downstream control inherits the gap. The second check is the mover event: does a role change in HR trigger access recertification, or does the employee carry their old entitlements into the new role? If the answer is "they carry," you have entitlement creep that access reviews cannot catch at scale. Fix the source and the mover flow before you blame SailPoint for the findings.

What is the difference between a control enforcement engine and a governance engine?

A control enforcement engine executes whatever configuration you give it, reliably and at scale. SailPoint, CyberArk, Entra ID, Okta, and similar IAM platforms fall into this category. A governance engine is the design layer that determines what the enforcement engine is configured to execute — who is authorized, what the role taxonomy looks like, how JML events are defined, what evidence the control must produce, and how decisions are documented and reviewed. The governance engine does not live inside the tool. It is built in advance and then wired into the tool. Confusing the two is the single most common failure mode in mid-market IAM programs.

Why do access certification campaigns often fail even with SailPoint running them?

Because the tool runs the campaign mechanically, but the design determines whether the campaign is a control or a ceremony. A healthy certification campaign has tiered scoping (high-risk entitlements reviewed more frequently than birthright access), business-language role descriptions (not "GRP_SAP_MFG_MTL_WRHS_AL01"), structural escalation with auto-revocation at day 15, and a rejection rate between 3 and 5 percent. When reviewers cannot understand the role names, the rejection rate collapses to zero, and a zero-percent rejection rate is a rubber-stamp signal examiners detect instantly. SailPoint will run the campaign either way. The tool is not the variable.
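The rejection rate is simple arithmetic: revoke decisions divided by total decisions. A minimal sketch, assuming decisions are exported as a flat list of "approve" and "revoke" strings (a hypothetical format), with the 3 to 5 percent band from above as the default thresholds:

```python
def rejection_rate(decisions):
    """Fraction of certification decisions that were revocations."""
    if not decisions:
        raise ValueError("campaign has no decisions")
    revoked = sum(1 for d in decisions if d == "revoke")
    return revoked / len(decisions)


def campaign_signal(decisions, low=0.03, high=0.05):
    """Classify a campaign by its rejection rate against the healthy band."""
    rate = rejection_rate(decisions)
    if rate == 0:
        return "rubber-stamp signal"
    if rate < low:
        return "below healthy band"
    if rate > high:
        return "above healthy band"
    return "within healthy band"


healthy = ["approve"] * 96 + ["revoke"] * 4   # 4 of 100 revoked
ceremonial = ["approve"] * 100                # nothing revoked

print(campaign_signal(healthy))      # within healthy band
print(campaign_signal(ceremonial))   # rubber-stamp signal
```

A rate above the band is worth a look too: it can mean provisioning upstream is granting access that should never have been assigned.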

How does CyberArk session recording differ from privileged access governance?

Session recording is a monitoring control — it captures what happened during a privileged session so that if something goes wrong, there is a record to investigate. Privileged access governance is a design discipline — it determines who should have privileged access in the first place, what justifies the elevation, how frequently that justification is reviewed, and what process removes access when it is no longer needed. Session recording is downstream of governance. An organization with complete session recording and no privileged access governance is monitoring privilege without managing it, and examiners grade on the governance side, not the monitoring side.

What is authoritative source integrity in IAM?

Authoritative source integrity is the discipline of ensuring that the system of record for identities — typically the HR Information System — is complete, current, and authoritative for every identity that touches the environment. Complete means it covers employees, contractors, consultants, temps, service accounts, and non-human identities including AI agents. Current means its data reflects the actual state of the organization within a defined lag (usually measured in hours, not days). Authoritative means no other system is allowed to create or modify identity records independently. When any of those three conditions is not met, the IAM platform downstream is operating on bad data, and no amount of tool configuration can compensate.
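The three conditions can each be turned into a test over the identity records themselves. The sketch below is one possible shape, under assumptions: the record fields (`identity_type`, `last_synced`, `created_by`), the `hris` source label, and the 24-hour lag limit are all hypothetical and would need to match your own system of record.

```python
from datetime import datetime, timedelta


def source_integrity_report(records, required_types, as_of,
                            authoritative_source="hris",
                            max_lag=timedelta(hours=24)):
    """Check identity records for completeness, currency, and authority."""
    types_present = {r["identity_type"] for r in records}
    return {
        # Complete: every required identity type is represented.
        "missing_types": sorted(set(required_types) - types_present),
        # Current: each record was synced within the allowed lag.
        "stale_records": sorted(
            r["id"] for r in records if as_of - r["last_synced"] > max_lag
        ),
        # Authoritative: no record was created outside the system of record.
        "created_outside_source": sorted(
            r["id"] for r in records if r["created_by"] != authoritative_source
        ),
    }


as_of = datetime(2025, 3, 31, 12, 0)
records = [
    {"id": "E1001", "identity_type": "employee",
     "last_synced": datetime(2025, 3, 31, 6, 0), "created_by": "hris"},
    {"id": "C2001", "identity_type": "contractor",
     "last_synced": datetime(2025, 3, 27, 6, 0), "created_by": "hris"},
    {"id": "S3001", "identity_type": "service_account",
     "last_synced": datetime(2025, 3, 31, 9, 0), "created_by": "helpdesk_script"},
]
required_types = {"employee", "contractor", "service_account", "ai_agent"}

report = source_integrity_report(records, required_types, as_of)
print(report)
```

In this example all three conditions fail at once: AI agents are not covered, one contractor record is days old, and one service account was created by something other than the system of record.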